According to The Verge, the AI industry’s biggest names are making extraordinary claims about imminent superintelligence, with Mark Zuckerberg heralding “creation and discovery of new things that aren’t imaginable today” and Anthropic’s Dario Amodei predicting AI smarter than Nobel Prize winners by 2026. Sam Altman claims OpenAI now knows how to build AGI, suggesting superintelligent AI could massively accelerate scientific discovery beyond human capabilities. These predictions all rest on treating large language models as the path to general intelligence, despite neuroscience research showing language and thought are fundamentally separate. The AI investment bubble depends on this questionable premise, with companies like Meta, Anthropic, and OpenAI building their strategies around scaling language models.

The fundamental confusion

Here’s the thing that gets lost in all the AI hype: language models are exactly that – models of language. They’re statistical pattern matchers trained on enormous amounts of text data, finding correlations between words and predicting what comes next. But human intelligence? That’s something entirely different. We use language to communicate our thoughts, not to generate them in the first place. Think about it – when you’re solving a complex problem or having a creative insight, the language part often comes afterward to explain what you’ve already figured out. The AI industry desperately wants language to equal intelligence because that’s what they’ve built their entire business on. But the science says otherwise.

What neuroscience actually reveals

The research is pretty clear on this. A recent Nature commentary by MIT and UC Berkeley scientists lays it out bluntly: “Language is primarily a tool for communication rather than thought.” They point to fMRI studies showing different brain networks activating for language versus other cognitive tasks like math or understanding others’ intentions. Even more compelling? People with severe language impairments from brain damage can still solve complex problems, follow nonverbal instructions, and engage in sophisticated reasoning. Their thinking remains intact even when their language abilities are compromised. And babies – they’re constantly learning, experimenting, and forming theories about the world long before they can speak. As Alison Gopnik’s research shows, children learn like scientists conducting experiments, all without language. So why does the AI industry keep pushing this language-equals-intelligence narrative?

The uncomfortable business reality

Basically, it’s because language models are what these companies know how to build. They’ve invested billions in data centers, Nvidia chips, and training infrastructure specifically designed for processing text. The entire “scaling is all we need” mantra depends on treating intelligence as a function of computational power applied to linguistic data. But human knowledge includes so much that isn’t easily captured in words – like how to ride a bike, read social cues, or understand physical cause and effect. Even within the AI community, there’s growing recognition that this approach has limits. Yann LeCun just left Meta to work on “world models” that understand physical reality. And prominent researchers like Yoshua Bengio and Gary Marcus are pushing for a more nuanced view of intelligence as “a complex architecture composed of many distinct abilities.” The problem is, while they’re offering better goalposts, nobody has a clear roadmap for actually building such systems.

Where this leaves us

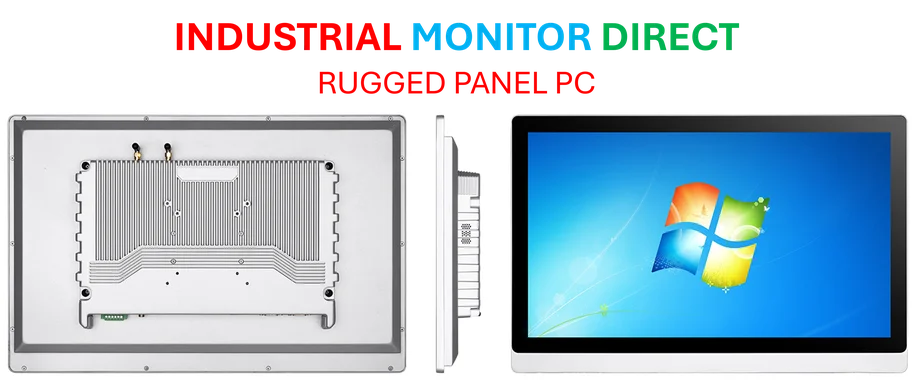

So what happens if the language-equals-intelligence premise is wrong? Well, the entire AI investment thesis starts looking pretty shaky. The AI industry’s bet is that if they throw enough computing power at language data, general intelligence will somehow emerge. But the neuroscience suggests they’re building better communication tools, not creating genuine thinking machines. They might develop amazing chatbots that sound intelligent, but whether that translates to actual understanding or reasoning? The evidence says probably not. And given the billions being invested and the world-changing promises being made, that’s a pretty big gap between hype and reality.